Microsoft's Maia 200 AI chip boosts AI inference performance, challenges Nvidia's dominance, and supports Azure services, making it an important topic for technology and competitive exam preparation.

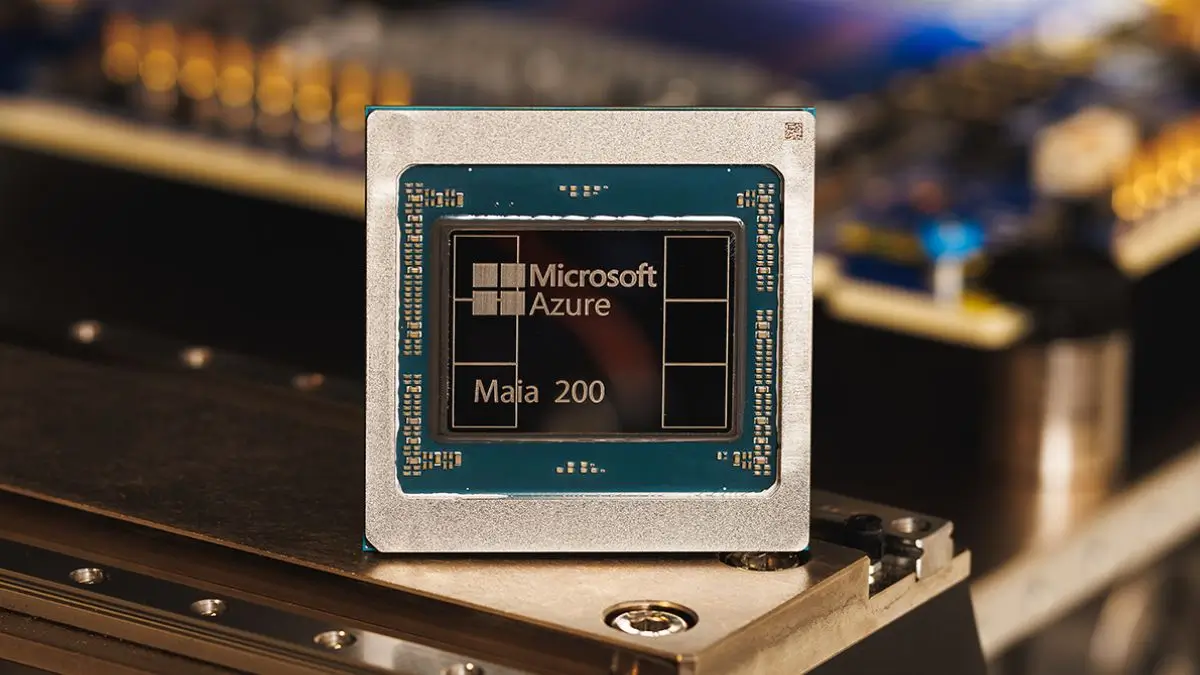

Microsoft Unveils Maia 200 AI Chip: A New Frontier in AI Hardware Innovation

Introduction: Microsoft’s Strategic AI Hardware Move

Microsoft recently unveiled its second-generation in-house artificial intelligence (AI) chip known as Maia 200, designed to close the performance gap with industry leader Nvidia and reduce reliance on external chipmakers. The announcement marks a significant step in Microsoft’s efforts to build advanced custom silicon for large-scale AI workloads.

What Is Maia 200 AI Chip?

Maia 200 is an AI accelerator built specifically for AI inference workloads—where trained AI models generate responses to user queries. It’s part of Microsoft’s broader strategy to vertically integrate hardware and software for more efficient AI services across its cloud platforms, especially Azure.

Cutting-Edge Technical Features

The Maia 200 is fabricated by TSMC on a 3-nanometer process, which allows extremely dense circuitry packing more than 140 billion transistors. The chip delivers over 10 petaFLOPS of performance at 4-bit floating-point precision (FP4) and more than 5 petaFLOPS at 8-bit precision (FP8), two key measures of AI computational capability.
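To give a feel for what a peak figure like 10 petaFLOPS means, the sketch below applies a common rule of thumb: a dense language model needs roughly 2 floating-point operations per parameter for each generated token. The 70-billion-parameter model is hypothetical, and the result is only a theoretical ceiling. Real throughput is lower because of memory traffic and imperfect hardware utilization.

```python
# Rough compute-bound ceiling on token generation, using the peak figures
# quoted for Maia 200 (over 10 petaFLOPS at FP4, over 5 petaFLOPS at FP8).
# Assumption: a dense model needs about 2 FLOPs per parameter per token.

PEAK_FLOPS = {
    "FP4": 10e15,  # 10 petaFLOPS = 10 * 10**15 operations per second
    "FP8": 5e15,   # 5 petaFLOPS
}

FLOPS_PER_PARAM_PER_TOKEN = 2  # rule of thumb: one multiply plus one add


def max_tokens_per_second(params: float, precision: str) -> float:
    """Upper bound on tokens/s if the chip ran at 100% of peak compute."""
    flops_per_token = FLOPS_PER_PARAM_PER_TOKEN * params
    return PEAK_FLOPS[precision] / flops_per_token


if __name__ == "__main__":
    hypothetical_params = 70e9  # hypothetical 70-billion-parameter model
    for precision in ("FP4", "FP8"):
        rate = max_tokens_per_second(hypothetical_params, precision)
        print(f"{precision}: at most ~{rate:,.0f} tokens/s")
```

Because the quoted FP4 peak is twice the FP8 peak, the FP4 ceiling comes out twice as high for the same model.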

Equipped with 216 GB of HBM3e high-bandwidth memory delivering roughly 7 TB/s, plus 272 MB of on-die SRAM, Maia 200 can feed data to AI models very quickly, reducing delays caused by data movement.
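The memory figures support a similar back-of-the-envelope estimate. When a model generates text one token at a time for a single request, its weights are typically read from memory at every step, so bandwidth rather than compute is often the limit. The sketch below assumes decimal units (1 GB = 10^9 bytes, 1 TB/s = 10^12 bytes/s), counts model weights only (ignoring activations and caches), and uses a hypothetical 70-billion-parameter model.

```python
# Back-of-the-envelope memory estimates from Maia 200's quoted figures:
# 216 GB of HBM3e at roughly 7 TB/s.
# Assumptions: decimal units, weights only, one full weight read per token.

HBM_CAPACITY_BYTES = 216e9  # 216 GB
HBM_BANDWIDTH_BPS = 7e12    # ~7 TB/s, in bytes per second

BITS_PER_PARAM = {"FP4": 4, "FP8": 8}


def weight_bytes(params: float, precision: str) -> float:
    """Bytes needed to store the weights at the given precision."""
    return params * BITS_PER_PARAM[precision] / 8


def max_params_in_hbm(precision: str) -> float:
    """How many parameters fit in HBM if nothing else is stored there."""
    return HBM_CAPACITY_BYTES * 8 / BITS_PER_PARAM[precision]


def bandwidth_bound_tokens_per_second(params: float, precision: str) -> float:
    """Ceiling on tokens/s if every token requires reading all weights once."""
    return HBM_BANDWIDTH_BPS / weight_bytes(params, precision)


if __name__ == "__main__":
    full_read_ms = HBM_CAPACITY_BYTES / HBM_BANDWIDTH_BPS * 1000
    print(f"Reading all of HBM once: ~{full_read_ms:.1f} ms")
    for precision in ("FP4", "FP8"):
        fit = max_params_in_hbm(precision) / 1e9
        rate = bandwidth_bound_tokens_per_second(70e9, precision)
        print(f"{precision}: up to ~{fit:.0f}B params fit; "
              f"~{rate:.0f} tokens/s for a 70B model")
```

Estimates like these help explain why chip designers pair large on-chip SRAM with fast HBM: for single-request generation, moving data rather than doing arithmetic is often the bottleneck.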

Optimized for Large-Scale AI Services

Unlike general-purpose chips, Maia 200 is optimized for inference, meaning it runs AI models efficiently at production scale. It is designed to power major AI workloads such as OpenAI's GPT-5.2 models, Microsoft 365 Copilot, and Microsoft Foundry services, enabling faster and more cost-effective processing of complex AI tasks.

Microsoft's announcement also covered software support intended to play a role similar to Nvidia's CUDA platform. This ecosystem, which includes support for the Triton programming framework, helps developers program the Maia architecture effectively and makes the chip easier to use in cloud environments.

Strategic Industry Implications

Maia 200 addresses a key industry challenge: dependence on Nvidia chips, which hold a dominant share of the AI hardware market. By developing their own silicon, Microsoft and other cloud providers can better manage costs, supply shortages, and infrastructure scalability.

Despite this in-house innovation, Microsoft has confirmed it will continue purchasing chips from Nvidia and AMD where needed, recognizing the ongoing role of external suppliers alongside custom silicon.

Why This News Is Important for Government Exam Aspirants

Relevance to Technology and Economy Sections

Understanding the Maia 200 AI chip launch is relevant for the Science & Technology and Economic Development sections of competitive exams like UPSC, PSCs, SSC, and banking exams. It reflects how global tech firms are innovating to solve real-world challenges related to artificial intelligence, cloud computing, and data-center scalability.

Link to India’s Digital and AI Strategy

This development also intersects with India's digital transformation goals under initiatives such as Digital India, which prioritise AI and advanced computing for governance and public services. Tracking global trends helps aspirants relate technological shifts to policy, economic impact, and strategic competition.

Industry Competition & Market Dynamics

The competition between tech giants such as Microsoft, Nvidia, Google, and Amazon illustrates global technological rivalry that shapes markets, job opportunities, and innovation direction. For candidates preparing for economics or current affairs segments, recognising these trends is crucial.

Historical Context: Evolution from GPUs to Custom AI Chips

Rise of AI Hardware – GPUs and CUDA

Initially, Graphics Processing Units (GPUs) dominated AI computation due to their parallel processing ability. Nvidia’s CUDA software ecosystem became widely adopted, leading to Nvidia’s significant share of the AI hardware market.

Emergence of Custom AI Silicon

As AI models grew more complex, demand for more efficient and specialised hardware increased. Cloud providers such as Google (TPU) and Amazon (Trainium) introduced their own AI chips to reduce dependence on Nvidia. Microsoft entered this space in 2023 with its first chip, Maia 100, and has now followed it with the higher-performance Maia 200.

Significance of Vertical Integration

The shift toward in-house silicon reflects broader industry strategies where major firms integrate hardware and software stacks to enhance performance, control costs, and protect supply chains. This trend has implications across sectors from consumer devices to enterprise AI services.

Key Takeaways from “Microsoft Unveils Maia 200 AI Chip”

| S.No. | Key Takeaway |
|---|---|
| 1 | Microsoft has launched the Maia 200 AI chip to challenge Nvidia's dominance in AI hardware. |
| 2 | The Maia 200 is built on TSMC's 3 nm process and delivers over 10 petaFLOPS of FP4 performance. |
| 3 | The chip features 216 GB of HBM3e memory and 272 MB of on-die SRAM for high-speed data access. |
| 4 | Microsoft's software tools, including support for the Triton framework, aim to rival Nvidia's CUDA ecosystem. |
| 5 | Microsoft will still use Nvidia and AMD chips where needed while expanding its own silicon deployments. |

FAQs – Frequently Asked Questions

1. What is the Microsoft Maia 200 AI chip?

The Maia 200 is Microsoft’s second-generation custom AI chip designed for large-scale AI inference workloads. It is aimed at competing with Nvidia and reducing dependency on external GPU suppliers.

2. What are the technical specifications of the Maia 200 chip?

The Maia 200 is built on TSMC's 3 nm process and features over 140 billion transistors. It delivers more than 10 petaFLOPS at FP4 and more than 5 petaFLOPS at FP8, and pairs 216 GB of HBM3e memory with 272 MB of on-die SRAM to speed up AI model processing.

3. How does Maia 200 compare with Nvidia GPUs?

While Nvidia GPUs dominate AI processing, Maia 200 is Microsoft’s attempt to offer a custom solution for cloud AI workloads with vertical integration of hardware and software, including support for frameworks like Triton.

4. What applications will use the Maia 200 AI chip?

It is primarily designed for AI inference in Azure, powering services like GPT models, Microsoft 365 Copilot, and other enterprise AI solutions.

5. Will Microsoft stop using Nvidia or AMD chips?

No, Microsoft will continue to purchase Nvidia and AMD chips where necessary, while deploying Maia 200 for internal AI services to improve efficiency and reduce dependency.

6. Why is Maia 200 significant for global AI competition?

It reflects the trend of tech giants developing custom AI silicon to control costs, enhance performance, and address supply chain constraints in AI hardware.

7. How is Maia 200 relevant to India's AI goals?

Understanding such innovations aligns with India’s Digital India and AI initiatives, helping aspirants link global tech trends to policy, economy, and digital infrastructure.

8. Which precision formats does Maia 200 support?

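The chip's headline performance figures are quoted at FP4 (4-bit) and FP8 (8-bit) precision. Representing numbers with fewer bits reduces memory use and data movement and increases throughput, which helps keep inference fast and cost-efficient.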
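To make "FP4" concrete: a 4-bit floating-point number has only 16 possible bit patterns. The sketch below assumes the E2M1 layout (1 sign bit, 2 exponent bits, 1 mantissa bit) used in the Open Compute Project's microscaling (MX) formats, whose non-negative values are 0, 0.5, 1, 1.5, 2, 3, 4 and 6. The article does not say which FP4 encoding Maia 200 uses, and real deployments also apply a shared scale factor to each block of values, which is omitted here.

```python
# Sketch: rounding real numbers onto a 4-bit floating-point grid.
# Assumption: E2M1 layout as in the OCP MX formats; Maia 200's exact
# FP4 encoding is not specified in the article. No block scaling is applied.

FP4_POSITIVE = [0.0, 0.5, 1.0, 1.5, 2.0, 3.0, 4.0, 6.0]


def to_fp4(x: float) -> float:
    """Round x to the nearest E2M1 value, saturating at +/-6.

    Ties go to the smaller magnitude, because min() keeps the first
    candidate in FP4_POSITIVE when two distances are equal.
    """
    sign = -1.0 if x < 0 else 1.0
    magnitude = min(abs(x), FP4_POSITIVE[-1])  # clamp out-of-range values
    nearest = min(FP4_POSITIVE, key=lambda v: abs(v - magnitude))
    return sign * nearest


if __name__ == "__main__":
    weights = [0.3, -1.2, 2.6, 7.9, -0.74]
    print([to_fp4(w) for w in weights])
```

Each value now fits in 4 bits instead of 16 or 32, which is where the memory and throughput gains of low-precision inference come from, at the cost of much coarser values.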

9. Who manufactures Maia 200?

The chip is fabricated by TSMC (Taiwan Semiconductor Manufacturing Company) on its advanced 3 nm process.

10. What software ecosystem supports Maia 200?

Microsoft provides a software stack, including support for the Triton programming framework, that is intended to play a role similar to Nvidia's CUDA and help developers program AI workloads efficiently.
